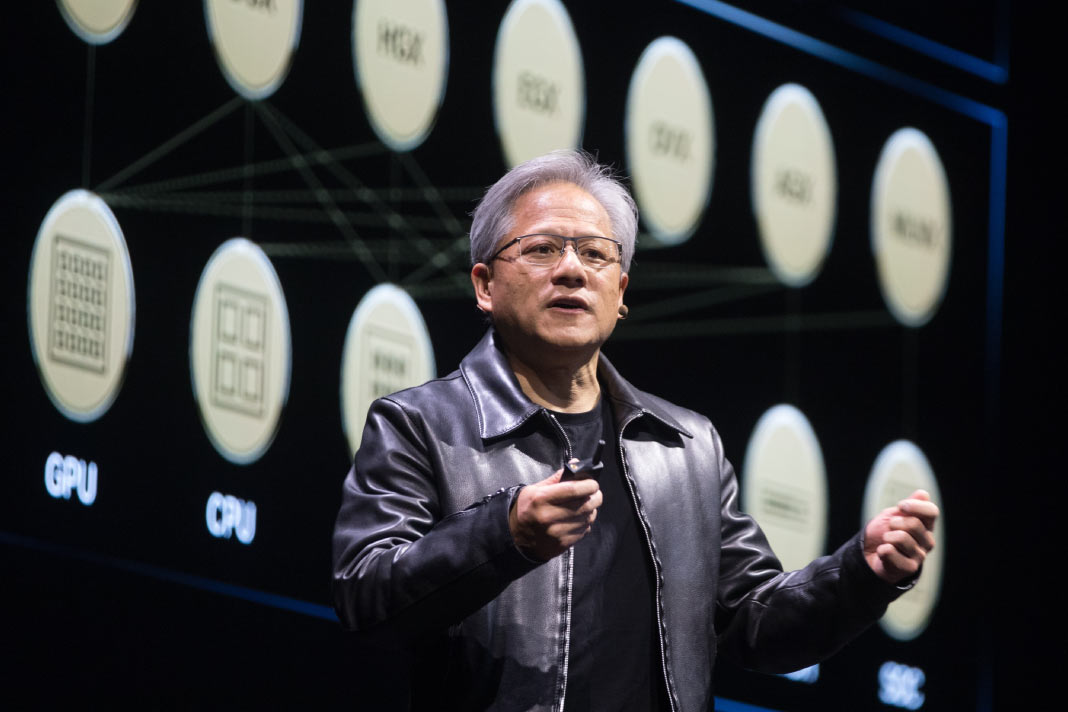

Jensen Huang’s annual conference has become the closest thing the AI industry has to a State of the Union

Nvidia’s GTC 2026 conference, running March 16 through 19 at the SAP Center in San Jose, is the event where the artificial intelligence industry takes stock of where it is and where it is headed next. This year, the answer to both questions is the same: bigger, faster, and more agentic than anyone projected twelve months ago.

Here is what has happened so far — and why it matters.

$1 Trillion in Chip Orders

The headline from Jensen Huang’s Monday keynote was a number that would have seemed implausible at last year’s conference. Huang said he expects combined purchase orders for Blackwell and Vera Rubin chips to reach $1 trillion through 2027, double the $500 billion revenue opportunity the company had projected for the two chip generations just a year ago.

The context matters. Nvidia’s graphics processing units for AI have made the company a household name and the most valuable public company in the world, worth about $4.5 trillion. The company projected that revenue this quarter will surge about 77% year over year to roughly $78 billion, extending a run of 11 straight quarters of revenue growth above 55%. The $1 trillion figure is not a projection born of optimism. It is a projection born of backlog.

Vera Rubin: The Next Generation Ships Later This Year

Nvidia is scheduled to roll out Vera Rubin later this year. The system, which is made up of 1.3 million components, will deliver ten times the performance per watt of its predecessor, Grace Blackwell, according to Nvidia. The Vera Rubin platform is already central to major partnership announcements at the conference, with IBM, Dell and Nebius Group all unveiling deployments built around it. That makes GTC 2026 effectively the commercial launch event for a chip that will define the next wave of enterprise AI infrastructure.

The Groq Integration: Speed Meets Scale

One of the more technically significant announcements was Nvidia’s unveiling of a new rack-scale system built around Groq’s LPU accelerators. The Groq 3 LPX rack will hold 256 LPUs and is designed to sit beside the Vera Rubin rack-scale system shipping to customers later this year. Huang said the Groq LPX rack can boost the tokens-per-watt performance of Rubin GPUs 35-fold. The pairing addresses a core tension in AI infrastructure: GPUs excel at high-throughput parallel processing, while Groq’s architecture excels at low-latency inference. Together, Huang argued, they solve for both.

OpenClaw: The Open-Source Agent Everyone Needs a Strategy For

The most talked-about moment of the keynote may have been Huang’s extended praise for OpenClaw, an open-source AI agent framework developed by programmer Peter Steinberger. Huang called OpenClaw’s success “incredible” and said every technology company now needs to think about its OpenClaw strategy. He described it as “the new computer” that “gave the industry exactly what it needed, exactly at the right time.”

Nvidia announced NemoClaw, a reference stack built specifically for OpenClaw and designed to make it enterprise-ready by addressing security concerns about giving AI agents access to company resources. For enterprise technology buyers, the implication is direct: OpenClaw is no longer a developer curiosity. It is becoming infrastructure, and Nvidia is positioning NemoClaw as the way to deploy it safely at scale.

Autonomous Vehicles: From Concept to 28 Cities

In automotive, Huang detailed a previously announced partnership with Uber: the ride-hailing company will launch a fleet powered by Nvidia’s Drive AV software across 28 cities on four continents by 2028, starting with Los Angeles and San Francisco next year. Huang also announced that Nissan, BYD, Geely, Isuzu and Hyundai are building level-4 autonomous vehicles on Nvidia’s Drive Hyperion platform, with Isuzu and Japan’s Tier IV also building autonomous buses on it.

The automotive announcements are significant not just for their scale but for their specificity. Level-4 autonomy, in which a vehicle can handle all driving tasks without human intervention within defined conditions, has long been a horizon the industry never seems to reach. The roster of manufacturers now committed to Nvidia’s platform suggests that horizon is finally drawing closer.

The Partner Ecosystem: Everyone Is Here

GTC has become the conference where the AI supply chain makes its annual appearance. At the conference, Microsoft announced updates to its platform for agentic and physical AI systems, combining Microsoft Foundry with Nvidia’s open models and accelerated computing into a single stack designed to meet strict data sovereignty requirements. Azure was also the first hyperscale cloud provider to power up the new Nvidia Vera Rubin NVL72 systems, which will roll out globally over the coming months.

IBM announced a deepened collaboration with Nvidia spanning GPU-native data analytics, document processing and regulated infrastructure deployments. Dell unveiled its AI Data Platform with Nvidia, including new storage systems designed to keep GPUs fully utilized at scale. Nebius Group disclosed a $27 billion, five-year infrastructure supply agreement with Meta built on Vera Rubin.

Nvidia Cloud Partners have doubled their AI factory footprint year over year, with more than one million Nvidia GPUs now deployed in AI factories across the globe, representing more than 1.7 gigawatts of AI capacity.

The Shift From Training to Inference

The organizing theme beneath all of GTC’s announcements is one that Huang stated plainly in his keynote: the AI economy has moved from the training phase into the inference phase, and that shift is driving a new wave of infrastructure demand. “Finally, AI is able to do productive work, and therefore the inflection point of inference has arrived,” he said.

The practical meaning is this: for years, the primary driver of AI chip demand was the cost of building models. That cost is now being matched — and soon will be exceeded — by the cost of running them at scale, across billions of daily interactions, in real-time agentic systems that spawn other agents to complete tasks. The infrastructure required for that world is different from the infrastructure built for the training era. GTC 2026 is where Nvidia made the case that it has already built what comes next.

The conference continues through March 19.