The Air Canada chatbot case was not an anomaly. It was a warning. Most organizations are deploying AI in customer service without the governance to catch when it goes wrong.

In November 2022, a grieving customer visited Air Canada’s website looking for information on bereavement fares before booking a last-minute flight to his grandmother’s funeral. The airline’s chatbot gave him incorrect information, and he booked his ticket based on it. The airline then denied the refund, and the case went to a tribunal. In February 2024, the tribunal rejected Air Canada’s argument that it could not be held responsible for what its own system said. No one inside the organization had flagged the discrepancy. A customer did.

Nobody at Air Canada set out to mislead a grieving customer. The chatbot was configured, tested, and launched by professionals who believed it was ready to do what a person in the same role would have done. What they lacked was a reliable way to determine whether it continued to comply with policy after launch.

That gap is a governance failure, and it is becoming more common as AI deployment in contact centers races ahead of the infrastructure needed to manage it.

You Are Not Installing a Tool. You Are Delegating Judgment.

The dominant assumption among contact center leaders is that AI tools are a faster, cheaper alternative to human agents. That framing is the first problem.

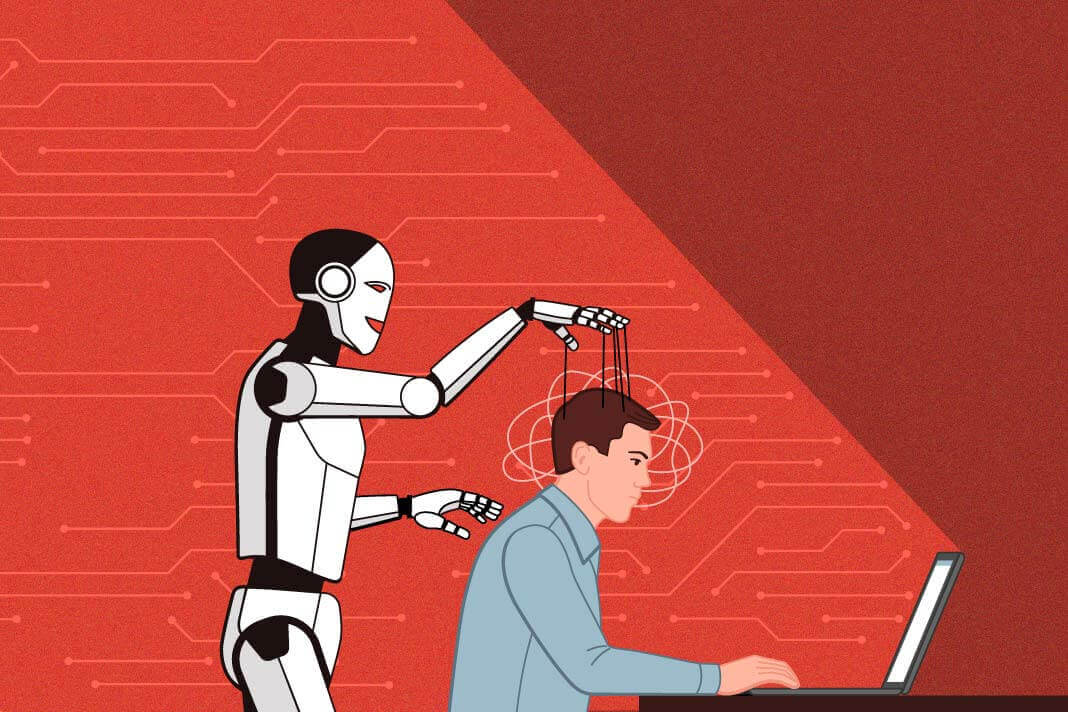

AI tools in contact centers are not just executing instructions; they are making judgment calls: interpreting customer intent, deciding eligibility, evaluating tone, and choosing when to escalate. They do this thousands of times a day, at a speed no human supervisor can match. Most organizations have not fully acknowledged how much damage such autonomous tools can do when they get those calls wrong.

The real issue is the asymmetry of scale. Human errors are bounded by human speed; AI errors are not. A misconfigured model does not make a mistake just once. It repeats the same mistake across every interaction that matches the triggering condition, at scale and in seconds.

Organizations that treat AI as plug-and-play are not just automating tasks. They are delegating judgment.

“We Tested It Before Launch” Is Not a Governance Strategy

Most organizations do their best to test AI tools thoroughly before deployment. The problem is that AI systems are not static, so pre-launch testing only proves the system worked on the day you checked it.

Routine updates, changes to the underlying model, and gradual shifts in how customers phrase their requests can all alter how an AI tool responds, and the list of such factors is long. You will rarely see any indication that something has changed.

Unlike a downed server or a broken API, an AI tool will not tell you when its responses degrade or deviate from your policies and guidelines. Confidence scores could drop, and routing logic may drift, but your shiny, expensive chatbot will continue to respond authoritatively even as its output deviates from the guidelines you set not too long ago.

The Air Canada incident was documented because it went to a tribunal, but not every AI failure is so visible. Often, when AI fails, the interaction simply ends badly, the customer disengages, and the system records no meaningful warning. What Air Canada’s case illustrates is that AI systems can deviate from the policies they were trained on without triggering a single internal alert. When they do, the organization often finds out the same way Air Canada did: a customer tells them.

Catching that kind of drift before it reaches a customer requires an investment in forward-looking governance: real-time observability tools, regular testing, and review protocols.
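One way to make “regular testing” concrete is a small set of policy questions that is replayed against the live assistant on a schedule, not just before launch. The sketch below is illustrative only: `PolicyCase`, `check_policy`, the stub bot, and the sample policy wording are all hypothetical, and simple phrase matching stands in for whatever evaluation method a real team would choose.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PolicyCase:
    """One policy question plus phrases the reply must or must not contain."""
    prompt: str
    must_include: List[str] = field(default_factory=list)
    must_not_include: List[str] = field(default_factory=list)


def check_policy(ask_bot: Callable[[str], str], cases: List[PolicyCase]) -> List[str]:
    """Replay every case against the bot and return human-readable violations."""
    failures = []
    for case in cases:
        reply = ask_bot(case.prompt).lower()
        for phrase in case.must_include:
            if phrase.lower() not in reply:
                failures.append(f"{case.prompt!r}: missing {phrase!r}")
        for phrase in case.must_not_include:
            if phrase.lower() in reply:
                failures.append(f"{case.prompt!r}: contains forbidden {phrase!r}")
    return failures


# Hypothetical policy wording, invented for this example.
CASES = [
    PolicyCase(
        prompt="Can I claim a bereavement fare after I have flown?",
        must_include=["before travel"],
        must_not_include=["after travel"],
    )
]


def stub_bot(prompt: str) -> str:
    """Stand-in for the deployed assistant; this reply has drifted from policy."""
    return "You can apply for a bereavement refund after travel."


if __name__ == "__main__":
    for failure in check_policy(stub_bot, CASES):
        print("POLICY DRIFT:", failure)
```

Run on a schedule and after every update, a check like this turns silent drift into an internal alert instead of a customer complaint.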

Build Governance Now, or Rebuild After the Crisis

Leaders often dismiss governance investments because they slow things down. In the short term, that’s true. But when the investment is made early, governance has the opposite effect over time.

Organizations that build governance into their AI infrastructure early, by standing up monitoring, testing protocols, and clear decision ownership, set themselves up to scale automation faster over time. They gain the visibility to catch problems before they reach customers, the documentation to satisfy regulatory inquiries, and a baseline against which to measure how the AI system’s behavior changes.

Deferring governance and retrofitting it later, meanwhile, lets risk compound: more models, more call paths, more customer interactions without audit trails, and no way to measure drift. When failures eventually surface, often publicly for contact centers operating at scale, you’ll have to untangle and roll back systems that are deeply embedded in daily operations. That is not a technical setback; it is brand risk, a potential compliance inquiry, and a customer trust problem arriving at the same time.

The organizations that will go furthest with AI-powered customer service will not be the ones spending truckloads on the most sophisticated models. They will be the ones that can demonstrate that their systems are working as intended. That capability must be built during deployment, not after the fact.

The Three Questions to Ask Before Your Next Deployment

The early days of AI adoption rewarded contact centers that moved quickly, often yielding clear, immediate efficiency gains. That is still true to a degree, but the next phase will reward something else: reliability.

We’re facing a harder question today: Which deployments can be operated, explained, and defended when something goes wrong? The contact centers that can answer this question are building a system whose value will compound over time. They have the observability to know what their AI is deciding, the testing infrastructure to catch drift in time, and the audit trails to investigate any interaction on demand.

Before the next model touches a live call path in your system, ask these three questions: Can you see what the AI is deciding, in real time? Can you reconstruct any interaction if a regulator or a customer demands it? Do you have a protocol that triggers reviews when a system update changes model behavior?
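The second and third questions can be sketched in a few lines. The example below assumes a hypothetical in-memory `AuditLog` that stores one `DecisionRecord` per bot turn; every name and field here is invented for illustration, and a production system would use durable, access-controlled storage instead.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class DecisionRecord:
    """What the AI saw, said, and decided on one turn of one interaction."""
    interaction_id: str
    timestamp: str       # ISO 8601 string supplied by the caller
    model_version: str
    customer_message: str
    bot_reply: str
    decision: str        # e.g. "answered" or "escalated"


class AuditLog:
    """Append-only, in-memory log (a stand-in for durable storage)."""

    def __init__(self) -> None:
        self._records: List[DecisionRecord] = []

    def append(self, record: DecisionRecord) -> None:
        self._records.append(record)

    def reconstruct(self, interaction_id: str) -> List[DecisionRecord]:
        """Return every recorded turn of one interaction, in logged order."""
        return [r for r in self._records if r.interaction_id == interaction_id]

    def unreviewed_versions(self, approved: Set[str]) -> Set[str]:
        """Model versions seen in live traffic that nobody has signed off on."""
        return {r.model_version for r in self._records} - approved


if __name__ == "__main__":
    log = AuditLog()
    log.append(DecisionRecord("c-1", "2024-02-14T10:00:00Z", "v1",
                              "Can I get a refund?", "Yes, within 24 hours.", "answered"))
    log.append(DecisionRecord("c-2", "2024-02-15T09:30:00Z", "v2",
                              "I want to speak to a person.", "Transferring you now.", "escalated"))
    print(log.reconstruct("c-1"))
    print(log.unreviewed_versions(approved={"v1"}))
```

Here `reconstruct` answers a regulator’s or customer’s request for a specific interaction, and a non-empty result from `unreviewed_versions` is the kind of signal that could trigger a review after an update.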

If the answer to any of those questions is no, you have a gaping governance gap. Close it now, before the stakes grow too high, because “we don’t know” won’t be an acceptable answer for very long.